Going AI-Native: Don't Ask If. Ask Where.

A four-zone framework and operational model for intentional AI adoption.

Apr 16, 2026

Originally posted on Substack.

Every org is pushing to become "AI-native." Training weeks. Hackathons. Mandates to integrate AI into every workflow. In some cases, AI-driven hiring freezes and layoffs.

For engineering, the path is relatively clear: tools like Claude Code have obvious, high-adoption use cases. As a result, engineering's AI-driven speed gains have raised the bar for everyone around it: PMs and designers are now expected to move just as fast, but without the same clarity on how. Leaders are running AI transformation programs, often without a clear framework for what kind of work should actually change. The result is a uniform push across all work types, regardless of whether AI is the right fit.

This feels like how organizations went head-first into Agile some two decades ago: Scrum for everything, applied uniformly across teams, products, and work types, regardless of whether it fit (spoiler: some products shouldn't use Agile). Most never course-corrected; orgs either rigidly stuck with Scrum or abandoned it entirely. Very few paused to ask where the methodology actually helped and where it didn't. AI adoption seems to be following the same pattern.

Instead of just asking how to go AI-native, orgs should also be asking where.

To a degree, the answer to the question "where" has been voiced. Paul Graham argues taste becomes more important when anyone can make anything. Sam Altman tells job seekers to develop taste as their edge. Rick Rubin — who admits he has "no technical ability" and barely touches the mixing board, but has legendary judgment — has become the archetype for what the future knowledge worker looks like.

The implication: AI replaces routine, objective, technical work first, while creative, subjective, taste- and judgment-heavy work survives. The logic feels reasonable: if there's a right answer, AI can find it. If the work requires human taste (aesthetic sensibility, creative vision, the intangible "I know it when I see it"), then humans stay essential. This framing isn't wrong, but it's incomplete.

Creativity Isn't the Moat Everyone Says It Is

Taste and judgment matter enormously. They're just not the only axis that determines where AI takes hold, or more importantly, where it doesn't.

Ethan Mollick's research at Wharton found that GPT-4 outperformed 91% of humans on standard creativity tests. In a separate business idea generation study, 35 of the top 40 ideas came from AI rather than people. Meanwhile, the roles actually being cut first are creative ones: illustrators down 33%, photographers down 28%, writers down 28%. If "creative" work were truly the last to fall, these numbers should be moving in the opposite direction.

AI eats whatever the organization treats as "good enough." Creative work is no exception.

Consider design. Design is divergent work: open-ended, with no single right answer. But when an organization consistently settles for "good enough" design, AI fills that gap easily. Canva built a $42 billion company on the insight that most organizations don't need the best designers, or even dedicated ones; they just need design done. AI accelerates the trade-off Canva already validated.

Now consider strategy. Strategy is also divergent work, but organizations never settle. They fight for every point of margin, every share of market, every quarter of growth. No leadership team looks at its competitive position and calls it "good enough"; the bar keeps moving because the stakes are existential.

The sorting mechanism isn't whether the work is creative or technical, subjective or objective. It's the organization's actual commitment to excellence and continuous improvement in that area.

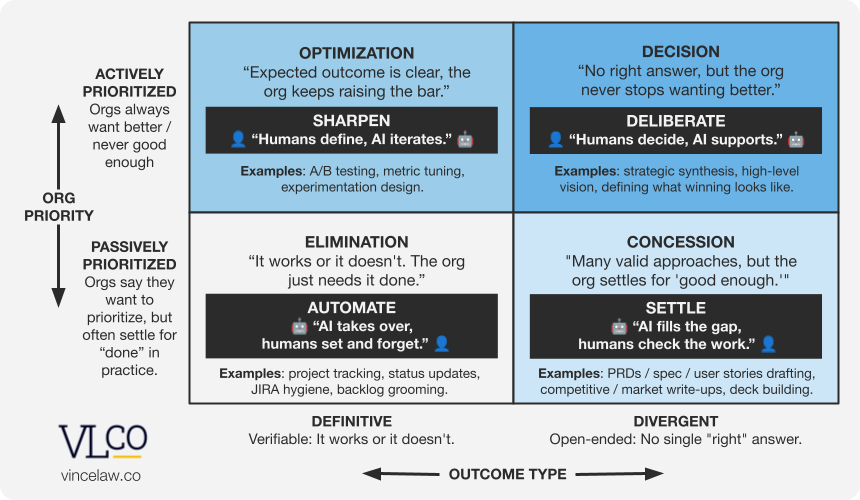

The AI Adoption Priority Matrix

Here is a way to make this sorting mechanism visible.

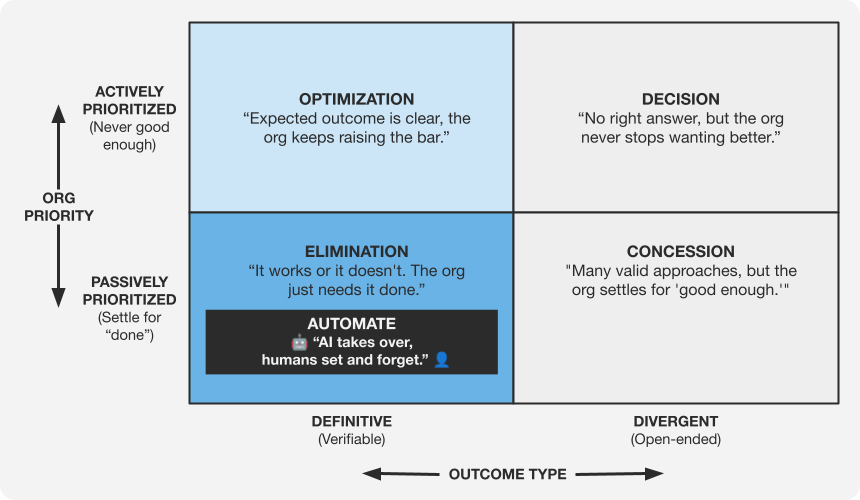

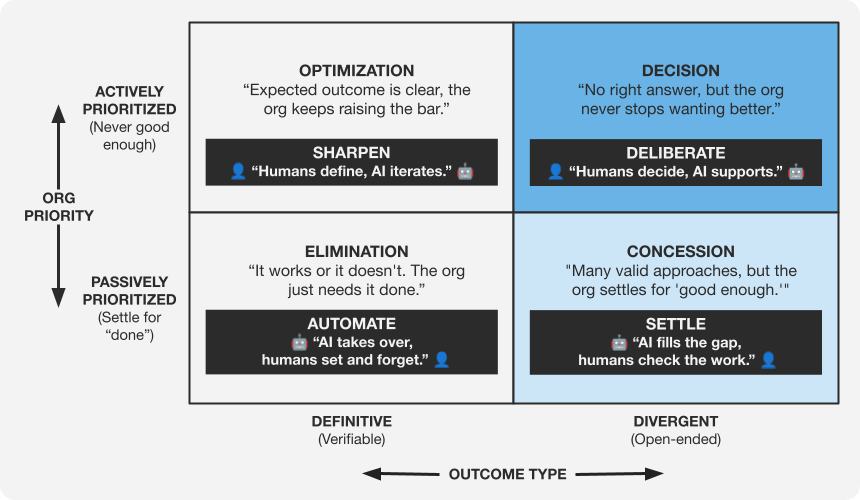

Consider a 2x2 that maps any type of work along two dimensions:

The X-axis: Outcome Type.

- Definitive — This is verifiable: it works or it doesn't; the solution converges to a single correct answer. A test passes, a migration completes, an endpoint returns the expected response.

- Divergent — This is open-ended: no single "right" answer, though some answers are objectively better than others. There are near-infinite product strategies, and while some can be deemed better than others, there's no definitive "best."

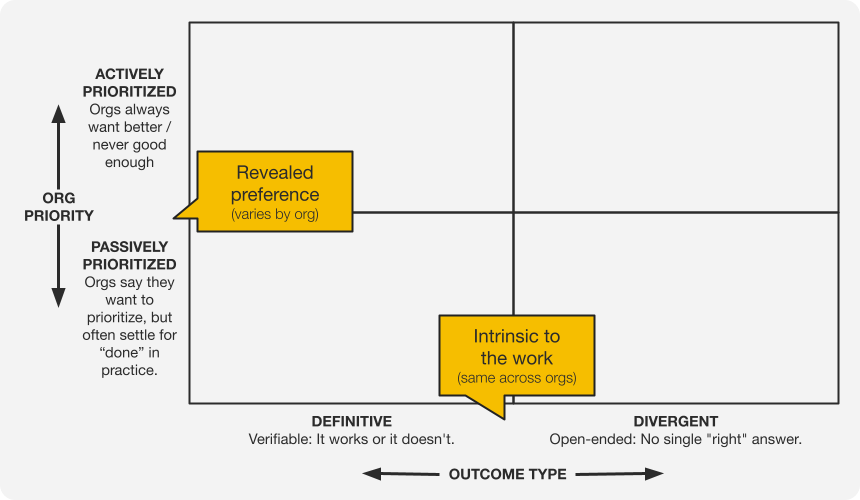

The Y-axis: Organizational Priority.

- Actively Prioritized — The organization demands excellence and continuous improvement here. There is no ceiling. The bar keeps rising.

- Passively Prioritized — The organization may say it cares, but in practice settles for "done" when competing priorities arise. Not explicitly deprioritized, just quietly allowed to slide. The distinction matters: this axis measures revealed preferences, not stated ones.

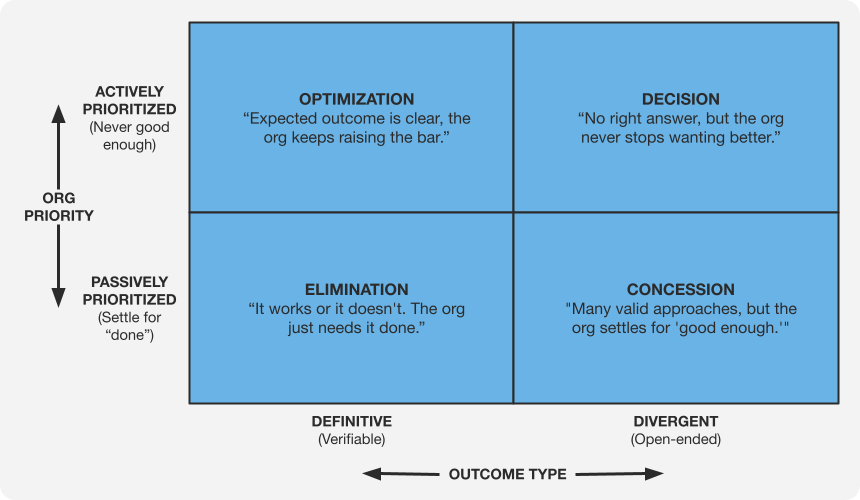

These two dimensions create four zones:

- Elimination (Definitive + Passively Prioritized): "It works or it doesn't. The org just needs it done."

- Optimization (Definitive + Actively Prioritized): "Expected outcome is clear, the org keeps raising the bar."

- Concession (Divergent + Passively Prioritized): "Many valid approaches, but the org settles for 'good enough.'"

- Decision (Divergent + Actively Prioritized): "No right answer, but the org never stops wanting better."
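For readers who think in code, the matrix is just a lookup keyed on the two axes. The sketch below is illustrative only: the enum and function names are hypothetical, the zone names come from the list above, and the operating-model verbs (Automate, Sharpen, Settle, Deliberate) are the ones assigned to each zone in the operating-model section. Note that the priority input is meant to be the revealed preference, not the stated one.

```python
from enum import Enum


class Outcome(Enum):
    DEFINITIVE = "definitive"  # verifiable: it works or it doesn't
    DIVERGENT = "divergent"    # open-ended: no single right answer


class Priority(Enum):
    ACTIVE = "active"    # revealed preference: the bar keeps rising
    PASSIVE = "passive"  # revealed preference: the org settles for "done"


# Zone name and operating-model verb for each (outcome, priority) pair.
ZONES = {
    (Outcome.DEFINITIVE, Priority.PASSIVE): ("Elimination", "Automate"),
    (Outcome.DEFINITIVE, Priority.ACTIVE): ("Optimization", "Sharpen"),
    (Outcome.DIVERGENT, Priority.PASSIVE): ("Concession", "Settle"),
    (Outcome.DIVERGENT, Priority.ACTIVE): ("Decision", "Deliberate"),
}


def classify(outcome: Outcome, priority: Priority) -> tuple[str, str]:
    """Return (zone, operating model) for a piece of work."""
    return ZONES[(outcome, priority)]


if __name__ == "__main__":
    # Hypothetical placements; the same task can land in a different zone in another org.
    examples = {
        "backlog grooming": (Outcome.DEFINITIVE, Priority.PASSIVE),
        "p99 latency work": (Outcome.DEFINITIVE, Priority.ACTIVE),
        "empty-state screens": (Outcome.DIVERGENT, Priority.PASSIVE),
        "product strategy": (Outcome.DIVERGENT, Priority.ACTIVE),
    }
    for task, (outcome, priority) in examples.items():
        zone, model = classify(outcome, priority)
        print(f"{task}: {zone} -> {model}")
```

The hard part is not the lookup but choosing the inputs honestly, especially the priority axis.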

Where AI Actually Fits: The Operating Model

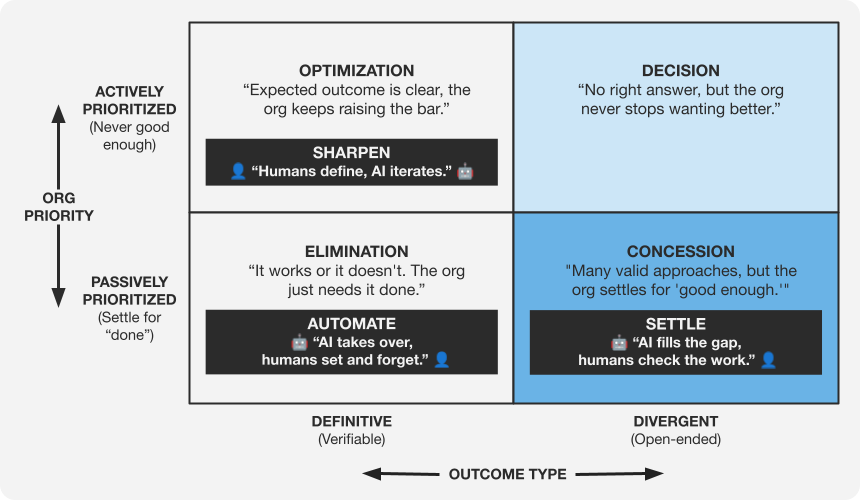

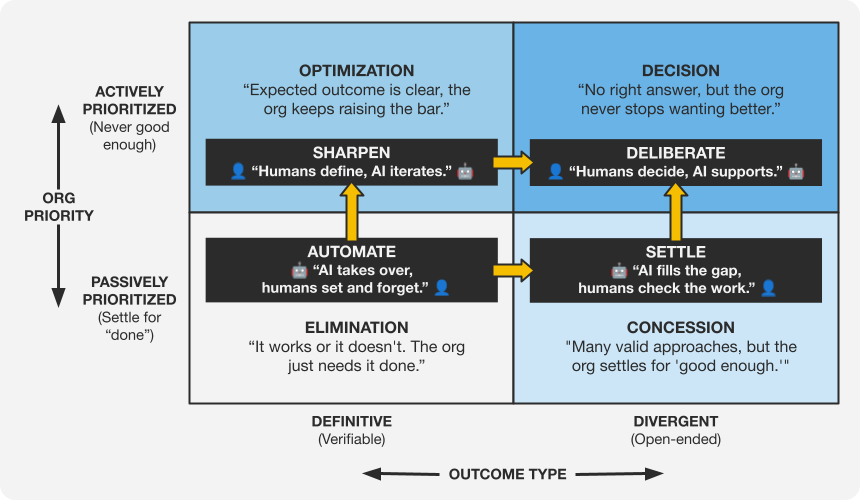

Each zone maps to a different approach to how humans and AI collaborate, creating an operating model across a spectrum. As you move from Elimination toward Decision, AI's role shrinks from owning the entire job to providing inputs; the human's role grows from not needing to look at the output to owning the outcome.

Most organizations already know critical decisions need human judgment. The subtler trap: "AI-native" programs borrow one operating model from Elimination — AI does the work, humans step aside — and quietly use it as the measuring stick for every other zone. That's the Elimination Trap.

Avoiding the trap means picking the right operating model for each zone, not the most aggressive one. Here's a breakdown of the dynamics and operating model within each zone:

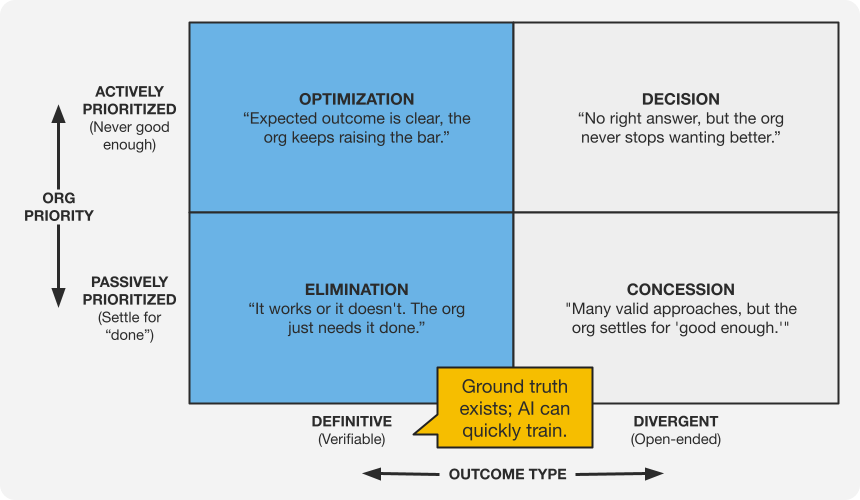

The Definitive Column

The left side of the matrix is where the work has a defined "right" answer. The organization knows what "works" and what "done" looks like; the only questions are how much and how fast.

This is also where AI improves fastest. Defined right answers give neural networks clean training signal — right-and-wrong examples, reliable evals, measurable benchmarks. When ground truth exists, models train against it and improve systematically. The feedback loop is tight, and it compounds.

Zone 1: Elimination → Automate. AI takes over. Not "AI assists." AI owns it end-to-end, ideally without a human even reviewing the output. This is where agentic AI gets pushed hardest, and where the efficiency gains are real and uncontroversial.

For PMs — project tracking, status updates, JIRA hygiene, and backlog grooming. For engineering — a narrower set of clear cases: scaffolding and boilerplate. For design — a similarly narrow set: template-driven production assets.

The common thread is that orgs just need it done, and AI can get it done.

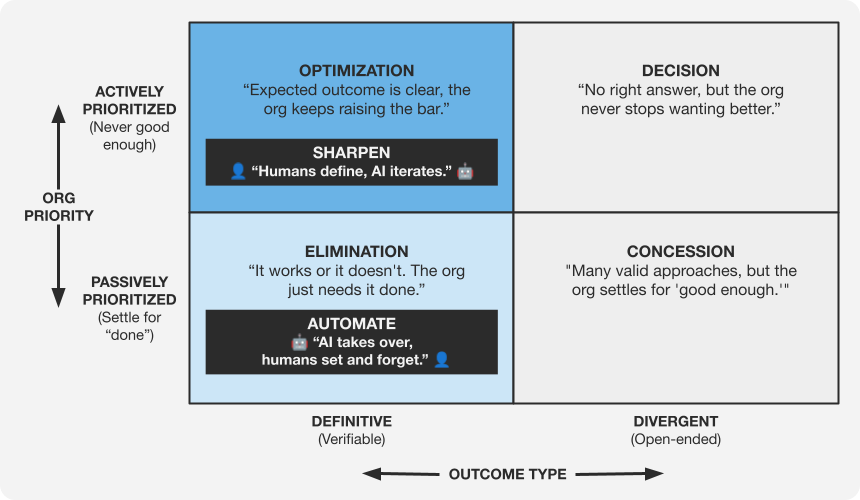

Zone 2: Optimization → Sharpen. Human sets the expected outcome, AI iterates toward it. This zone is AI-augmented, not AI-led; the human defines what "better" means and pushes AI to get there.

For PMs — A/B testing, metric tuning, and experimentation design. For engineering — performance and reliability work: another 10ms, another nine of uptime, against benchmarks the organization keeps raising. For design — accessibility compliance, one of the few areas with explicit, measurable standards that rise over time.

The PM zone is already showing what relatively autonomous AI optimization looks like in practice. Anthropic's head of growth Amol Avasare has described their internal tool CASH (Claude Accelerates Sustainable Hypergrowth) autonomously running the full experimentation loop — identifying opportunities, building features, running tests, analyzing results — currently on small edits like copy variations and UI changes (LinkedIn summary, full episode on Lenny's Podcast). His honest assessment: "the win rate is like a junior PM, I would expect a senior PM to do better." The human still defines what "better" means — conversion, activation, retention — but AI is running the loop against that bar.

The work in this zone has clear benchmarks, and the organization keeps raising them. AI sharpens the work against those benchmarks, but humans still decide which benchmarks matter.

Because the bar keeps rising, AI's speed gains don't get converted into layoffs. They get converted into output.

Engineering is the visible proof. The "end of engineers" narrative predicted layoffs that haven't come. The same analysis that showed illustrators, photographers, and writers down 28–33% found engineering roles holding steady or growing, with director- and VP-level roles up year-over-year. Orgs are spending the new speed on more opportunities, not on smaller teams.

When work is actively prioritized, AI doesn't mean half the humans doing the same work. It means the same humans doing twice the work.

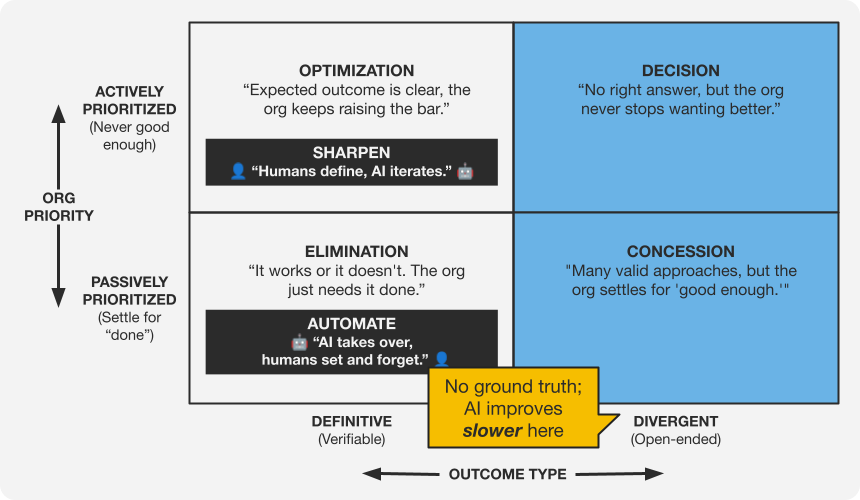

The Divergent Column

The Divergent side of the matrix is where AI adoption gets interesting. AI does "good enough" quickly on divergent work; that's what powers the Concession zone. But closing the gap to excellent — the quality bar that defines the Decision zone — moves slowly, for two related reasons:

- AI can't train on it cleanly. Definitive work is trainable: clean right-and-wrong examples, reliable evals, measurable progress. Divergent work doesn't offer those anchors. Without ground truth, there's no training set to optimize against and no way to measure whether the model has actually improved. The indeterminate nature of the work is its own moat.

- Humans hold a durable advantage in accumulated experience. Good judgment on divergent work is built from years of context and scars that AI doesn't have (yet). What makes this whole side of the matrix more "AI-resistant" is that the judgment required comes from a record of what worked and what didn't, and that record of lived experiences isn't in the training data.

AI is coming for this side of the matrix too, but only up to "good enough." The climb to "excellent" is much slower.

Zone 3: Concession → Settle (For Speed and Scale). AI fills the gap. And it fills it fast. This zone is where AI's speed advantage is hardest to argue with. AI output here lands at passable, not exceptional, but it lands quickly and at scale. The trade-off is craft for speed and scale, and in this zone, those attributes win on their own merits. The real question in this zone isn't whether AI can do the work better than a dedicated human, but whether AI is good enough for this specific work. When it is, the economics make the case on their own.

For PMs — PRD and spec drafting, competitive and market write-ups, and deck building, where AI can produce imperfect but usable drafts in seconds. For engineering — code documentation, where AI can generate docs directly from the code it's already seen. For design — the long tail of screens (empty states, error messages, admin UIs), where AI can produce workable patterns faster than a design team can staff them.

Design sits in a particularly tight spot here. Designers lived with the gap between stated and revealed priority long before AI: organizations say they value design, but when deadlines loom, it ships at "good enough." AI widens that gap. As engineering moves faster, the delivery cadence compresses faster than the design process can stretch to match, and designers are often not in the room when the pace is set. (Note: This is not a commentary on design's value, but on where the revealed gap runs widest.)

This is the zone that rewards thoughtful reflection. Every piece of work you deliberately concede to AI here frees up time and attention for the decisions that genuinely need you. When done well, Concession is a purposeful, strategic choice about where your energy belongs. The test that follows helps you sort what to let go of intentionally from what's still worth fighting for.

The Concession Smell Test. Three questions to pressure-test at the organizational level:

1. Priority. Has the organization already been operating at "good enough" on this work, with or without AI? This is measured by revealed preference, shaped by culture, budgets, and what actually gets funded when trade-offs are forced. It is the 2x2's Y-axis made specific: passively prioritized in practice, regardless of what leadership says on the careers page.

2. Fit. Does AI output clear that bar? Not the aspirational version of the work. The operational one the organization is already accepting. If the baseline has been X and AI delivers X, the switch is on the table.

3. Speed. Does the speed advantage create enough reclaimed capacity to redirect toward higher-leverage work? AI is almost always faster. That's not the test. The test is whether the volume and recurrence of the work turn that speed into organizational capacity you can actually redirect. Saving ten minutes on a one-off is invisible. Saving ten hours per week on recurring work shifts what the organization can do.

If #1 is no — the work isn't Concession. It belongs in Optimization or Decision, where the organization is actually willing to invest.

If #2 is no — AI isn't ready for this work yet. Revisit as capability improves.

If #3 is no — the speed gain doesn't create redirectable capacity. The work stays where it is until volume or recurrence justifies the switch.
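As a rough sketch, the smell test is an ordered series of gates: the first "no" decides the outcome. Everything named here (`WorkItem`, `smell_test`, the field names) is hypothetical, and `MIN_HOURS_PER_WEEK` is an assumed cutoff for "redirectable" capacity; the article deliberately gives no number, only the contrast between ten minutes on a one-off and ten hours per week on recurring work.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    name: str
    org_settles_for_good_enough: bool  # 1. Priority: revealed, not stated
    ai_clears_current_bar: bool        # 2. Fit: against the operational bar
    hours_saved_per_week: float        # 3. Speed: recurring capacity reclaimed


# ASSUMPTION: illustrative threshold only; set it from your own org's context.
MIN_HOURS_PER_WEEK = 2.0


def smell_test(item: WorkItem) -> str:
    """Apply the three questions in order; the first failing one decides."""
    if not item.org_settles_for_good_enough:
        return "Not Concession: belongs in Optimization or Decision"
    if not item.ai_clears_current_bar:
        return "AI not ready: revisit as capability improves"
    if item.hours_saved_per_week < MIN_HOURS_PER_WEEK:
        return "Stays as-is: not enough redirectable capacity"
    return "Concede to AI"


if __name__ == "__main__":
    recurring = WorkItem("weekly status updates", True, True, 10.0)
    one_off = WorkItem("one-off summary", True, True, 10 / 60)  # ten minutes, once
    print(smell_test(recurring))
    print(smell_test(one_off))
```

Ordering the checks this way mirrors the three "If #N is no" rules: a failed Priority check sends the work out of Concession entirely, regardless of how well AI would perform on it.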

Zone 4: Decision → Deliberate. Human owns the thinking, but AI earns its keep on the front end. Used well, AI can accelerate research, broaden the brainstorm, and surface better data to inform the call. That is real leverage in a zone where better inputs produce better decisions. What AI doesn't do is make the call itself. AI is a tool in service of the decision, not the decision-maker.

For PMs — strategic synthesis, high-level vision, and defining what winning looks like. For engineering — technical vision and platform-level bets, the calls whose downstream consequences take years to play out. For design — experience vision and defining what "great" feels like for the product.

As most PMs and leaders know, what shapes a divergent decision is human-to-human experience, discussion, and friction that AI hasn't captured (yet).

In a world where AI makes everything fast, the Decision zone is where speed is not the primary vector of optimization. The organization is paying for judgment and for access to the signal that never got written down.

As work moves from Elimination toward Decision, AI's role shrinks from doing the entire job to providing inputs. The human's role grows from no intervention to glancing at output to owning the outcome. Seeing this gradient — from full automation to full human ownership — is the core value of the framework.

It's About Priority

The through-line across all four zones is what the organization actually fights for vs. what it quietly concedes.

Work has a hard floor. Even at AI's efficiency ceiling, it doesn't compress to zero. The real question is which work the organization demands continuous improvement on, and which work it allows to plateau. Every organization says it values design, developer experience, documentation, and spec quality. The Y-axis measures what organizations do when resources are scarce and trade-offs are real, not what they say.

That revealed preference — not the nature of the work itself — is the actual sorting mechanism. It determines whether AI replaces the work entirely, augments the person doing it, or simply provides inputs to a human decision-maker.

What This Means for You

Map your own work to the matrix. Not the generic examples above. Your actual work, in your actual org. The same task lands in different zones depending on context.

PRD writing is Concession at an org where specs aren't read, but it could be Optimization at a company where spec quality is a cultural cornerstone. Similarly, code documentation is Concession at most startups, but Optimization at a company like Stripe where developer docs are the developer experience. The framework is a lens, not a prescription. The quadrant labels describe typical placements, but only you know where your organization actually prioritizes.

Three questions worth sitting with:

Where are you spending human energy on Elimination-zone work? If you're still manually grooming backlogs, writing status updates by hand, or building boilerplate from scratch, that's human effort directed at work AI should own entirely. The real cost is the Decision-zone work that isn't getting done instead.

What work are you needlessly fighting for that you should concede for speed? Apply the smell test. If the org has already been operating at "good enough" on this work (Priority), AI output clears that bar (Fit), and the volume creates redirectable capacity (Speed): you're spending human energy defending a line the organization already moved. Conceding here reclaims hours for the Decision-zone work only you can do.

Where is AI creeping into your Decision zone? The pressure to "use AI for everything" doesn't exempt strategic work. If AI is drafting product strategy and the human role has been reduced to rubber-stamping, the organization has automated the one function it actually needs humans to own. For PMs, this is already happening through a different mechanism: when engineering moves at AI speed, the PM who's still optimizing the backlog isn't in the room when the actual decisions get made. Prioritization was the PM's leverage when engineering capacity was the constraint. When that constraint changes, staying in the Elimination zone — managing tickets faster, writing specs more efficiently — means ceding the Decision zone by default.

Where the Human Premium Lives

The direction of value is upward and to the right. As AI takes over the bottom half of the matrix and the Definitive column, the human premium concentrates in work that is both divergent and actively prioritized: work where the answer isn't obvious and the organization never stops demanding better.

The framework is how you answer where: what to build with AI, what to augment, and what to let go.

The Hard Part

Answering where is necessary. Executing on it is the hard part.

The shift from organic AI application to intentional AI adoption requires support structures most organizations haven't invested in yet. Training that goes beyond "how to prompt" and into "how to evaluate AI output in your specific domain." Management practices that distinguish between zones rather than treating all AI use the same way. Culture that is honest about what the organization actually prioritizes, not what it claims to prioritize on the careers page.

The organizations that get this right won't be the fastest to adopt AI. They'll be the most intentional about where they adopt it. And that distinction matters more than most leaders realize.

Translating this framework from theory into reality takes organizational muscle. If your team is ready to do that work and you need a thought partner to help guide the transition, let's talk.